Each cycle, as the election approaches, polling firms across the country publish polls that purport to show voters’ attitudes about the direction of the country and how they intend to vote in November. Much of the time, those polls, despite claiming a margin of error of only 3 or 4 points, differ from one another by double digits. Two polls, allegedly of the same population, can publish results that are 11 or 12 points apart. If polling methodology were treated as the science it is, it would reliably return a result within a few points each time. How can Rasmussen (among the most reliable of the 2016 polling firms) release a poll on September 22 showing Biden with a 1 point lead nationally while WaPo/ABC News (among the least accurate in 2016) releases a poll on the 24th showing Biden with a 10 point lead? Even if we factored for the biggest errors in either direction, there could be anywhere between a 14 point lead for Biden and a 3 point lead for Biden. How can polls that supposedly survey the same people at around the same time land 11 points apart? How can one poll have 2% undecided and the other 7% undecided?
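
For readers who want to see where a "3 or 4%" margin of error comes from, here is a minimal sketch of the textbook formula for a simple random sample at 95% confidence. The sample sizes are hypothetical, not taken from any poll mentioned here, and real firms adjust their figures for weighting, so treat this as an approximation rather than any pollster's actual method. Note that the figure applies to a single candidate's share, not to the lead.

```python
import math

def margin_of_error(sample_size, proportion=0.5, z=1.96):
    """Approximate 95% margin of error for one candidate's share.

    Assumes a simple random sample; proportion=0.5 gives the widest margin.
    """
    return z * math.sqrt(proportion * (1 - proportion) / sample_size)

# Hypothetical sample sizes, chosen only for illustration.
for n in (600, 1000, 2500):
    print(f"n={n}: +/- {margin_of_error(n) * 100:.1f} points")
```

A sample of about 1,000 respondents lands near 3 points and a sample of about 600 lands near 4, which is why those two figures show up so often.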

While national polls leading up to the election are a fun little way of identifying the attitudes of the public, the closer we get to the election, the more these polls become meaningless and irrelevant.

The biggest problem with national polls, as with most polls, is sampling. The only way to ensure that a national poll is accurate is to ensure that the sample from each state is proportionate to that state’s share of the vote in the Electoral College (EC). In other words, if Arizona makes up 2% of the country’s population but 7% of your sampled voters, your result is not likely to be anywhere near accurate. Why? Voters’ attitudes differ, constantly and consistently, from state to state. If 90% of your data suddenly comes from New York and California, while those states combined account for only about 20% of the country’s population, your result is going to skew left. If your sample is mostly voters from heartland states, your poll is going to skew right.
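
The Arizona example above can be sketched in a few lines. This is only an illustration: the support numbers are made up, the `weighted_support` helper is hypothetical, and `target_share` stands in for whatever share you believe each state should carry (population or EC share).

```python
def weighted_support(groups):
    """Return (raw, weighted) support for a candidate.

    raw uses each group's share of the sample as collected;
    weighted uses the share the group should have had.
    """
    raw = sum(g["sample_share"] * g["support"] for g in groups)
    weighted = sum(g["target_share"] * g["support"] for g in groups)
    return raw, weighted

# Hypothetical numbers: Arizona is 7% of the sample but should be 2%.
groups = [
    {"name": "Arizona", "sample_share": 0.07, "target_share": 0.02, "support": 0.48},
    {"name": "Rest of country", "sample_share": 0.93, "target_share": 0.98, "support": 0.52},
]

raw, weighted = weighted_support(groups)
print(f"raw: {raw:.2%}, weighted: {weighted:.2%}")
```

The gap here is small because only one state is off. The more states that are over- or under-sampled, and the more their voters differ from the rest of the country, the further the raw number drifts from the weighted one.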

Independents in one state may be more likely to vote Democrat, while independents in another may be more likely to vote Republican. Whatever the party and ideological attitude may be nationally, if the sample isn’t properly weighted according to each state’s weight in the EC, the result will likely be skewed. Even national attitudes regarding party affiliation shift greatly during the months leading up to the election. A Gallup poll from the period ending August 12 had Democrats with a 5 point lead nationally in voters’ self-identified party affiliation, while by September 13 that lead had narrowed to just one point.

The issue with an accurate national sample is that obtaining one is so cost-prohibitive and time-consuming that no firm will do it. It is easier for them to just randomly call or survey people online and then weight the results to their best guess of what turnout will be in November. That “methodology” is perhaps okay as an attempt to identify a general “feeling” of the country, but it shouldn’t be used, in any way, to determine an actual national polling result.

Another issue that national polls face is turnout models. In the most recent NY Times/Siena poll, 11% of the “likely” voters didn’t vote in 2016. Voter attitudes may have changed and new voters may have registered (and there’s no way of determining the propensity of new voters to turn out, as they literally don’t have a history of voting behavior), but there’s no way that 11% of likely voters didn’t vote in 2016. Since 2008, Presidential voter turnout has decreased 2% (Voting Age Population to Total Voters). To suggest that turnout is going to increase 11% this year is asinine.

Nate Silver and Real Clear Politics are two examples of places that get the idea (they are not polling firms, but they use polling firm data). Both provide maps, based upon the Electoral College, that project the result of a national election. While we run into the same sampling problems in each of the states (as I have previously written about here at RedState), at least those maps are built on the potential EC result as opposed to the meaningless national vote.

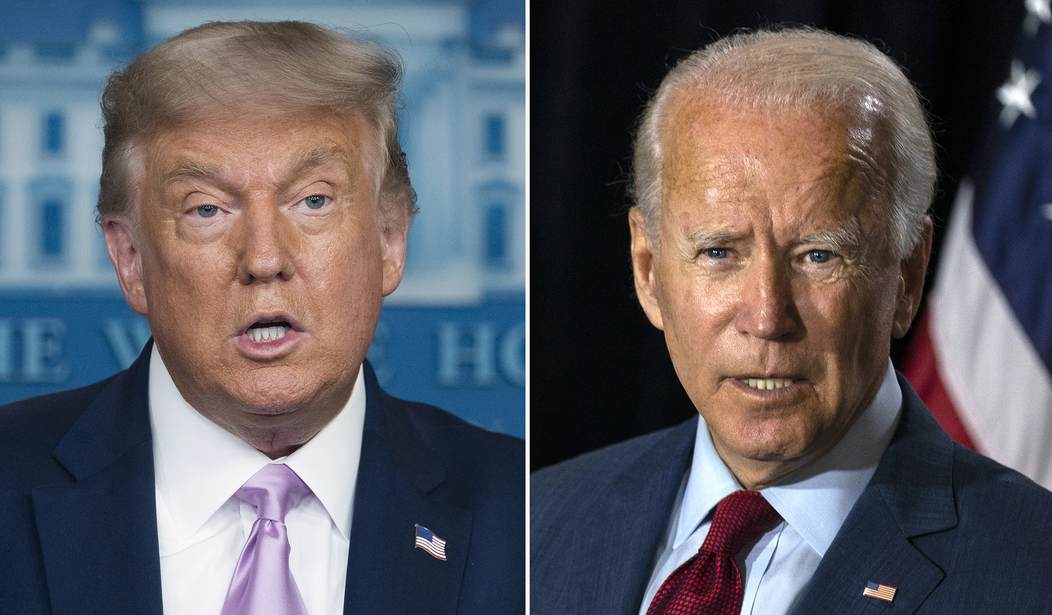

Before October 1st, national polls are a novelty, a “cute” way of gauging public opinion. They are wildly inaccurate and in no way reliable. After October 1st, they become less a means of measuring public opinion and more a way of shaping it. My advice (and what I will begin doing weekly here at RedState) is to examine the battleground state polls. Look at sampling. Does it match both registration and prior turnout? If not, what would the poll say if it did? Take a look at RCP’s “Make Your Own” map section. Toy with your own maps. Trump is in a much better position to win reelection than the polls make it out to be.
