If George Orwell were still alive, he might write something like “in Facebook’s eyes, all users are equal. But some users are more equal than others.” A recent report revealed that the social media company treats high-profile users more leniently than everyone else when they violate its terms of service.

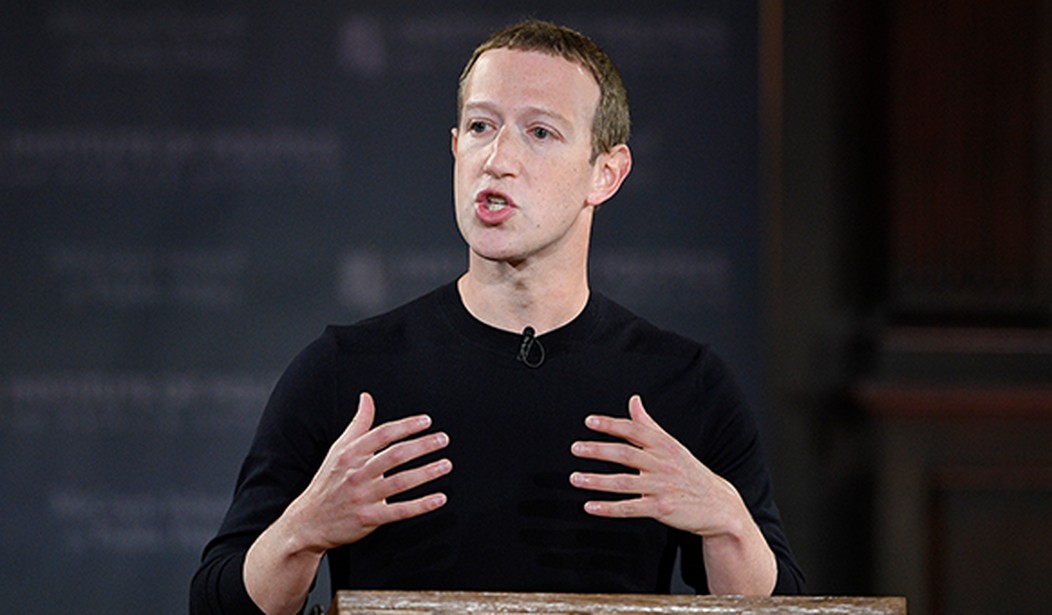

The Wall Street Journal published a report noting that while Facebook CEO Mark Zuckerberg publicly claimed the company allows its users to communicate “on equal footing” with more prominent users, “the company has built a system that has exempted high-profile users from some or all of its rules.”

According to documents reviewed by the Journal, Facebook created a program called “cross check” or “XCheck” which was originally intended to be a quality-control method to evaluate complaints lodged against prominent users. Politicians, celebrities, journalists, and other high-profile individuals benefitted from the program. WSJ noted:

Today, it shields millions of VIP users from the company’s normal enforcement process, the documents show. Some users are “whitelisted”—rendered immune from enforcement actions—while others are allowed to post rule-violating material pending Facebook employee reviews that often never come.

The documents revealed that XCheck “has protected public figures whose posts contain harassment or incitement to violence, violations that would typically lead to sanctions for regular users.”

For example, in 2019, it shielded Neymar, a Brazilian soccer superstar, who posted nude photos of a woman who accused him of rape. While the post was later taken down by Facebook, he has not had his account terminated even though his violation would get a normal person removed from the platform.

Others who were “whitelisted” shared content that Facebook’s fact-checkers found to be false. This includes posts claiming that COVID vaccines are deadly, that Hillary Clinton shielded “pedophile rings,” and that former President Donald Trump had referred to asylum seekers as “animals.”

An internal review found that the program resulted in brazen bias in favor of the elite who used the platform. The report noted that the company is “not actually doing what we say we do publicly” and that this constituted a “breach of trust.” It added:

Unlike the rest of our community, these people can violate our standards without any consequences.

The XCheck program ballooned to 5.8 million users in 2020. “In its struggle to accurately moderate a torrent of content and avoid negative attention, Facebook created invisible elite tiers within the social network,” according to WSJ.

Even more stunning is that Facebook not only deceived the public about its treatment of elite accounts but also did the same to the Oversight Board it created, ostensibly to hold the company accountable for its enforcement systems. In June, the company deceptively told the board that its program for high-profile users was implemented in only “a small number of decisions.”

The Journal noted that some of the documents it reviewed have been submitted to the Securities and Exchange Commission (SEC) and Congress “by a person seeking federal whistleblower protection.”

Zuckerberg in 2018 indicated that Facebook gets about 10 percent of its content removal decisions wrong. In many cases, users are not told which rule they violated or given an opportunity to appeal a decision. However, this is not the case for elite users.

From WSJ:

Users designated for XCheck review, however, are treated more deferentially. Facebook designed the system to minimize what its employees have described in the documents as “PR fires”—negative media attention that comes from botched enforcement actions taken against VIPs.

If Facebook’s systems conclude that one of those accounts might have broken its rules, they don’t remove the content—at least not right away, the documents indicate. They route the complaint into a separate system, staffed by better-trained, full-time employees, for additional layers of review.

One of the issues with the program is that too many employees are able to add users to the XCheck system. A 2019 audit showed that at least 45 teams had the ability to whitelist users. Kate Klonick, a law professor at St. John’s University, told the Journal that the documents show that the company deceived the Oversight Board. “Why would they spend so much time and money setting up the Oversight Board, then lie to it?” she said, asserting that “this is going to completely undercut it.”

The documents do show that Facebook is trying to do away with whitelisting. They indicated the company sought to eliminate complete immunity for “high severity” violations of its rules in the first half of 2021. However, it appears that by March, the company was experiencing challenges in that regard.

The documents also indicated that there are no plans to just treat high-profile users the same as the rest of the unwashed masses. One of the memos noted that the company does not “have systems built out to do that extra diligence for all integrity actions that can occur for a VIP.” Facebook seems to be trying not to take steps that could anger influential individuals using its platform.

That Facebook would blatantly lie to the public and its own Oversight Board is sleazy, but not surprising. Nor is it a shock that the company would treat celebrities and politicians with kid gloves while subjecting the rest of us to censorship based on political bias. There are two motivators here: politics and profit.

In general, Facebook tends to favor content created for a left-leaning audience. Its fact-checkers are decidedly partisan and tend to focus primarily on squashing right-leaning posts. It suspended Trump for two years under the guise of trying to eliminate incitements to violence. But the reality is that the company engages in this censorious activity because it has an agenda to serve.

Additionally, Facebook doesn’t want to risk angering the rich and famous individuals using its platform because they could decide to use a different social media company. It doesn’t want to lose those clicks and advertising dollars. This wouldn’t be as big an issue if the company were honest about it. But that’s not the world we live in now, is it?