If you’re thinking about getting a flu shot this year, you might want to do some rethinking.

If it’s too late and you’ve already gotten one, or you’ve been getting one regularly for years, you might want to take a deep breath.

The information you’re about to receive may cause outrage.

Most people who get a flu vaccine probably assume that it’s going to… you know… prevent them from getting the flu. I mean, why would you go through the trouble and incur the risk of a serious adverse reaction that attends taking any vaccine if you didn’t think it would be effective?

But, as I alerted our readers to a few months back, when people like Anthony Fauci hawk some treatment as effective, it doesn’t mean quite what you think.

A Washington Post article I cited made the shocking claims that the flu vaccine only “clocks in most years at 40% to 60% effective,” while the FDA will only “require a coronavirus vaccine to be 50% effective.”

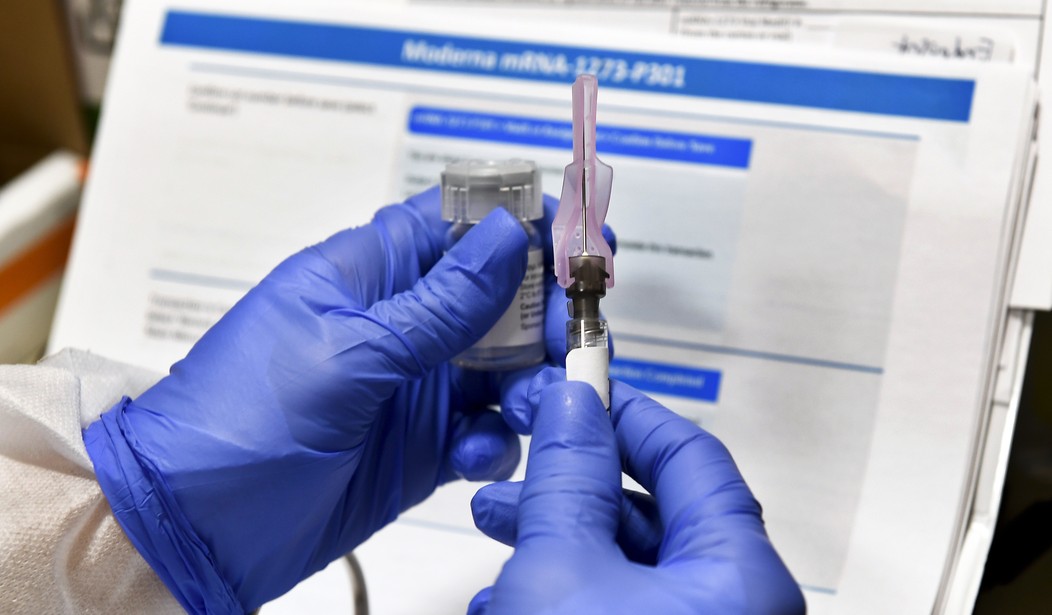

As I later reported, the low 50% bar the FDA is setting for effectiveness turns out to be just one of many troubling facts nobody’s telling you about the COVID-19 vaccine the government will soon be insisting we all line up for the second it’s available.

But as lousy as the rate it cited for the flu vaccine was, the Washington Post had nonetheless greatly exaggerated the vaccine’s effectiveness.

If the CDC’s own figures are to be believed, over the prior 15 flu seasons the flu vaccine reached the low bar of 40% effectiveness only nine times. It’s been as low as a jaw-dropping 10%, meaning vaccinated people were only 10% less likely to come down with the flu than people who skipped the shot, but it has never gone above 60%.

The average effectiveness for the past 15 flu seasons turns out to be only 40%.

On the CDC’s own figures, people who went to the trouble of getting the flu vaccine over the last 15 years were, on average, only 40% less likely to come down with the flu than those who didn’t.

> Q: What product with a 60% failure rate do US officials aggressively push?
>
> A: The flu vaccine!
>
> The CDC says average effectiveness for past 15 seasons is 40%. It's been as low as 10%.
>
> If it were a toaster, they'd make sure we know.
>
> But it's only a vaccine so who cares, right? pic.twitter.com/isfPEoMULc
>
> Michael Thau (@MichaelThau), October 16, 2020

Moreover, as bad as these figures are, there’s some reason to think they might be intentionally inflated.

When determining a vaccine’s effectiveness, researchers compare the odds of having been vaccinated among a group of people infected with the flu against the same odds among a group of uninfected people. Those in the latter group are called the control subjects.
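To make that comparison concrete: in the standard definition, vaccine effectiveness (VE) is the proportional reduction in flu risk among vaccinated people, and in studies built this way it is estimated as one minus the odds ratio. The sketch below computes that unadjusted estimate from four counts. The function name and all of the counts are hypothetical, chosen for illustration and not taken from any CDC study. Real studies also adjust for factors such as age, so treat this as a simplified sketch. It shows how comparing the same flu cases against two different control groups can produce very different ratings.

```python
from fractions import Fraction


def vaccine_effectiveness(cases_vaccinated: int, cases_unvaccinated: int,
                          controls_vaccinated: int, controls_unvaccinated: int) -> Fraction:
    """Unadjusted VE in percent, estimated as (1 - odds ratio) * 100.

    The odds ratio compares the odds of having been vaccinated among flu
    cases with the same odds among control subjects. Fraction keeps the
    arithmetic exact. Hypothetical helper, for illustration only.
    """
    odds_cases = Fraction(cases_vaccinated, cases_unvaccinated)
    odds_controls = Fraction(controls_vaccinated, controls_unvaccinated)
    odds_ratio = odds_cases / odds_controls
    return (1 - odds_ratio) * 100


# Hypothetical counts (not real data): the same 100 flu cases,
# compared against two different hypothetical control groups.
cases_vacc, cases_unvacc = 20, 80

ve_a = vaccine_effectiveness(cases_vacc, cases_unvacc, 40, 60)  # control group A
ve_b = vaccine_effectiveness(cases_vacc, cases_unvacc, 25, 75)  # control group B

print(float(ve_a))  # 62.5
print(float(ve_b))  # 25.0
```

With control group B, the 25% figure means vaccinated people's odds of flu were a quarter lower than those of the unvaccinated. The only thing that changed between the two results is who was counted as a control.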

The studies for the 2004-05, 2005-06, and 2006-07 flu vaccines yielded two different effectiveness rates for each season. One was obtained using what the authors call “traditional” control subjects, that is, healthy people from the general population. The other was obtained using patients who were ill but not with influenza, whom the authors called “test-negative” control subjects.

In all subsequent flu seasons, it appears that traditional subjects were abandoned and only test-negative control subjects were used. And for those three seasons for which rates were obtained using both, the CDC chose the figures that came from test-negative subjects and ignored the ones derived using traditional subjects.

Using test-negative subjects, however, gave flu vaccines significantly higher effectiveness ratings. The 10% rating for the 2004-05 vaccine dropped all the way down to 5% when traditional subjects were used. That would mean vaccinated people were only 5% less likely to come down with the flu than unvaccinated people, in return for the trouble, expense, and risk they incurred.

The 21% rating for the 2005-06 flu vaccine dropped down to 10% when the traditional method was used. The 52% rating for the 2006-07 vaccine dropped to 37%.

But the authors of the study for those three seasons explicitly say that “using test-negative control subjects is a new approach with little precedent” and that test-negative subjects, though similar in some respects, “likely differ from the source population in other ways that affect generalizability.”

Moreover, even the study of the 2015-16 flu vaccine notes that “the test-negative design is still comparatively new. This design may be subject to biases that are not fully understood.”

But if that was still true in 2016, why was the use of traditional control subjects discontinued in 2007 in favor of test-negative subjects?

It’s hard not to suspect that the higher vaccine effectiveness ratings that resulted might be the answer.

After all, even the numbers the CDC got using test-negative controls are awful. Even if they’re correct, over the last 15 years the flu vaccine has, on average, lowered vaccinated people’s risk of getting the flu by only 40%.

You’re lucky in any given year if it’s 50% effective, and there’d be nothing at all unusual about a season in which getting vaccinated lowered Americans’ risk of the flu by only a quarter.

The CDC aggressively pushes flu vaccines, and virtually none of those foolish enough to trust them have a clue that, in a typical year, the vaccine they’re relying on to keep them healthy cuts their risk of catching the flu by less than half.

So it’s hardly a stretch to suppose that traditional controls were abandoned in favor of a method that researchers admit may have unknown biases because it consistently yielded somewhat less dismal effectiveness ratings.

But dismal they are nonetheless.

Whatever it is that the CDC is up to, it doesn’t seem like it has anything at all to do with disease control.